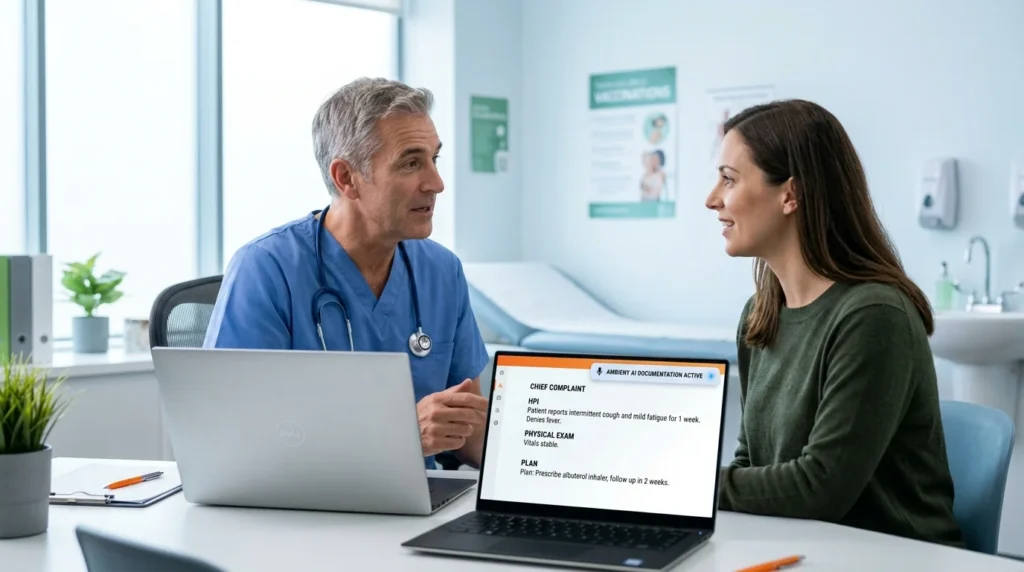

Implementing AI clinical documentation in a small clinic takes 2 to 4 weeks from signed agreement to first approved note. That is the honest answer for an independent GP practice, specialty clinic, or allied health practice implementing an ambient documentation system with native EHR integration. It is not 4 to 8 months. It is not a multi-phase enterprise rollout requiring a dedicated IT team. For a small clinic that has selected the right system and configured it to its specific workflows before go-live, the timeline from kickoff to live documentation is 2 to 4 weeks.

This post explains what happens in each of those weeks, what affects the timeline, what can slow it down, what a realistic first 30 days looks like after go-live, and how the small clinic implementation experience differs from the enterprise timelines that dominate most of the published data on this topic.

Key Takeaways: Implementation and Adoption of AI Documentation

1. Rapid Deployment Timelines: Small clinics can go fully live in just 2 to 4 weeks using native connectors for Epic or Athena Health. While enterprise systems take longer due to environmental complexity, most small practices see their first AI-generated notes within 10 to 14 days of kickoff.

2. Age is Not a Barrier: Data from 2.5 million encounters shows no correlation between a physician’s age or experience and their adoption of AI scribes. Success is predicted by workflow fit, template quality, and involving the clinician in the initial configuration.

3. The Training Requirement: Skipping structured onboarding leads to 30–40% lower adoption rates. Individualized, one-on-one “champion” training is the most significant facilitator for making the technology intuitive and effective for the clinical team.

4. The Critical 30-Day Window: Long-term habits are formed in the first month. Clinicians who record at least 5 encounters in the first two weeks and receive support for note corrections are significantly more likely to maintain a permanent workflow change.

5. Measurable Financial ROI: Practices typically see a return of $3.20 for every $1 invested within 14 months. Beyond saving 1–2 hours of daily documentation time, AI adoption is associated with increased RVUs and fewer claim denials due to superior coding capture.

Why Do Most Clinics Overestimate How Long Implementation Takes?

The published implementation timelines for AI clinical documentation vary so widely that clinic owners often enter the evaluation process with inflated expectations about complexity and duration. The reason is simple: most published data comes from enterprise health system deployments.

Cleveland Clinic rolled out ambient AI documentation to more than 4,000 eligible physicians and advanced practice providers, reaching active use by more than 4,000 of them within 15 weeks. The Permanente Medical Group (TPMG) deployed ambient AI scribes to 3,400 physicians in 10 weeks. Neither rollout is comparable to a small clinic implementation. Both involve thousands of clinicians, dozens of specialties, complex legacy system integrations, multi-site rollouts, governance committees, and phased change management programmes.

Enterprise implementation timelines, which typically run 12 to 16 weeks according to a 2025 AI clinical documentation implementation analysis, reflect that complexity. They do not reflect what a 2 to 5 provider independent practice experiences when implementing a modern ambient documentation system with a pre-built native connector to their existing EHR.

For small clinics, the implementation timeline is governed by four factors: how quickly the EHR integration can be established, how long template configuration takes, how many clinicians need to be trained and at what depth, and how quickly the clinic can commit internal time to the go-live process. In most small clinic environments, all four of those factors resolve within 2 to 4 weeks.

What Affects the AI Clinical Documentation Implementation Timeline for a Small Clinic?

Before the week-by-week breakdown, it is useful to understand which variables affect the timeline and by how much.

EHR type and integration method. Native integrations with Epic and Athena Health, where the AI documentation system connects directly within the EHR workflow rather than requiring a separate interface, are the fastest to implement. A native connector with pre-built API compatibility can complete technical integration in 2 to 5 business days. Non-native integrations, where the documentation system sits outside the EHR and requires clipboard or manual transfer workflows, take longer and introduce ongoing reliability risks that affect adoption. For small clinics choosing between systems, native EHR integration is the single most important technical requirement for a short implementation timeline.

Number of clinicians and specialties. A single-provider practice or a two-provider practice with the same specialty can complete template configuration and clinician training in a single session. A five-provider practice with multiple specialties requires separate template configurations for each discipline and parallel training sessions. The difference in timeline between a 1-provider and a 5-provider implementation is typically 3 to 5 additional days, not weeks.

Template configuration complexity. Generic SOAP templates can be applied immediately. Specialty-specific templates for disciplines like rheumatology, pain management, orthopaedics, or allied health require additional configuration time to capture the clinical language, note structures, and billing code documentation requirements specific to that discipline. This is worth the time. A template configured for the clinic’s specific clinical language and billing requirements generates notes that support accurate coding from the first approved session. A generic template applied without configuration produces notes that require heavy editing and often do not capture the documentation elements that support accurate E/M coding.

Clinic internal availability. The most common cause of implementation delays in small clinics is not the technology. It is internal scheduling. Configuration sessions require a clinical champion to be available, template review requires clinician time, and go-live requires a week of active clinical use with feedback. If the clinic has a packed schedule with no protected time for implementation tasks, the calendar extends the timeline more than any technical factor.

The Week-by-Week Implementation Timeline for a Small Clinic

Week 1: Discovery, access, and integration setup

What happens: The vendor implementation team completes the initial discovery session to understand the clinic’s EHR environment, note templates, clinical specialties, and workflow. Technical access to the EHR integration is established. For native Epic or Athena integrations, the API connection is configured and tested within the first 3 to 5 business days.

What the clinic does: One clinical champion, typically the lead clinician or practice manager, spends 2 to 3 hours in a configuration discovery session with the implementation team. No other clinician time is required in Week 1. No patients are affected. No clinical workflow changes occur.

What can slow this down: EHR administrator credentials not available on day one. IT security review processes that require additional approval steps. Clinics using legacy EHR systems without pre-built API connectors. If the EHR integration requires a non-native connection, add 3 to 7 business days to Week 1.

What is completed by end of Week 1: EHR integration live and tested in a sandboxed environment. Initial clinical specialty confirmed. Template configuration brief prepared.

For clinics that want to understand how ambient listening technology works before committing to an implementation, “What Is Ambient Clinical Documentation” explains the mechanism in plain terms.

Week 2: Template configuration and clinician orientation

What happens: The vendor team configures clinical note templates to the clinic’s specific workflows, clinical language, and specialty requirements. Templates are built in the AI documentation system to match the note formats the clinicians already use. The clinical champion reviews the draft templates and provides feedback. One round of revisions is standard.

What the clinic does: The clinical champion spends 1 to 2 hours reviewing templates and providing specific feedback on clinical language, section structure, and any specialty-specific requirements. A 45 to 60-minute orientation session is conducted for all clinicians who will use the system. This session covers how the ambient listening works, how to start and end a session, how to review and approve a generated note, and how to flag corrections for the feedback loop.

What can slow this down: Templates requiring multiple revision rounds due to highly specialised clinical language. Clinicians unavailable for orientation during the week. Clinics with three or more specialties requiring parallel template configurations.

What is completed by end of Week 2: Templates configured and approved by clinical champion. All clinicians oriented. EHR integration tested with template output. System ready for supervised go-live.

Week 3: Supervised go-live

What happens: Clinicians begin using the system in live patient encounters. The first 5 to 10 sessions per clinician are the highest-learning-curve sessions. Notes generated in this period are reviewed more carefully, corrections are flagged through the feedback loop, and the vendor support team is actively available for questions.

The goal of supervised go-live is not perfection from the first note. It is establishing the workflow habit and identifying any template adjustments needed based on real encounter data. Minor template adjustments are made during this week based on live clinical feedback.

What the clinic does: Clinicians use the system on every applicable encounter during Week 3. The clinical champion collects feedback from each clinician at the end of each day of the go-live week. Any note corrections or template feedback are submitted through the vendor feedback channel.

What can slow this down: Clinicians using the system inconsistently during the go-live week. Missing the 5-session adoption threshold that builds the workflow habit. Template adjustments requiring significant reconfiguration rather than minor edits.

What is completed by end of Week 3: All clinicians have completed at least 5 live encounter sessions. Template refinements incorporated. Documentation workflow established for each clinician. System performing at a level that supports independent use.
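The Week 3 exit criterion of at least 5 live sessions per clinician is easy to check mechanically. The following is a minimal sketch, assuming the clinic can export a simple list with one clinician name per completed session; the export format, the function name, and the sample names are all hypothetical and not part of any vendor product.

```python
from collections import Counter

ADOPTION_THRESHOLD = 5  # minimum live sessions per clinician, from the Week 3 goal


def clinicians_below_threshold(session_log, clinicians, threshold=ADOPTION_THRESHOLD):
    """Return {clinician: sessions_completed} for anyone under the threshold.

    session_log is a list of clinician names, one entry per completed
    live encounter session (a hypothetical export format).
    """
    counts = Counter(session_log)
    # Counter returns 0 for clinicians with no sessions at all.
    return {name: counts[name] for name in clinicians if counts[name] < threshold}


# Hypothetical go-live week: Dr A has 7 sessions, Dr B has 3, Dr C has none.
clinicians = ["Dr A", "Dr B", "Dr C"]
session_log = ["Dr A"] * 7 + ["Dr B"] * 3

print(clinicians_below_threshold(session_log, clinicians))
# {'Dr B': 3, 'Dr C': 0}
```

A clinical champion could run a check like this at the end of each go-live day to see who needs a nudge before the week closes.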

Week 4: Independent use and first performance review

What happens: Clinicians use the system independently without supervised support. Notes are generated, reviewed, and approved as a standard part of the clinical day. The vendor team conducts a first-month performance check, reviewing note quality metrics, after-hours documentation time (if tracked), and any outstanding template issues.

What the clinic does: Clinicians continue independent use. Clinical champion collects brief end-of-week feedback from each clinician. The practice manager or clinic owner reviews any available metrics on note completion time and after-hours documentation changes.

What is completed by end of Week 4: System fully live. All clinicians independently using AI documentation on all applicable encounters. First performance review completed. Any outstanding template adjustments finalised.
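To put the four weeks on a calendar, the end-of-week outcomes above can be mapped to dates. This is a rough sketch that assumes a Monday kickoff, a Friday end to each clinic week, and no delays; the milestone wording is paraphrased from the sections above and the function name is illustrative.

```python
from datetime import date, timedelta

# End-of-week outcomes, paraphrased from the week-by-week timeline above.
MILESTONES = [
    (1, "EHR integration tested; template configuration brief prepared"),
    (2, "Templates approved; all clinicians oriented"),
    (3, "Supervised go-live; at least 5 live sessions per clinician"),
    (4, "Independent use; first performance review completed"),
]


def milestone_dates(kickoff):
    """Return (friday_date, milestone) pairs for a kickoff that falls on a Monday."""
    if kickoff.weekday() != 0:  # Monday is 0
        raise ValueError("this sketch assumes a Monday kickoff")
    return [
        (kickoff + timedelta(weeks=week - 1, days=4), label)
        for week, label in MILESTONES
    ]


# Example: kickoff on Monday 2 March 2026.
for due, label in milestone_dates(date(2026, 3, 2)):
    print(due.isoformat(), label)
```

Any of the delays discussed later in this post would push the affected milestone and everything after it.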

Weeks 5 to 8: Optimisation and adoption solidification

Weeks 5 to 8 are not part of the initial implementation timeline but they are worth understanding because they determine whether the Week 4 live status translates into permanent workflow change.

The most significant adoption risk in AI documentation is not the first two weeks. It is weeks 5 to 12, when initial novelty wears off and inconsistent use habits can develop if the feedback loop is not maintained. Clinicians who receive regular note quality feedback and see measurable time savings data in this period maintain adoption. Clinicians who receive no feedback and cannot see their own time metrics are significantly more likely to reduce use.

For small clinics, the optimisation period should include a monthly check-in with the vendor team, a simple internal tracking mechanism for after-hours documentation time, and a mechanism for clinicians to request template refinements as their clinical language and workflow preferences become clearer through extended use.
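The internal tracking mechanism does not need to be sophisticated. As one possible sketch, assuming each clinician self-reports after-hours documentation minutes per clinic day, a baseline week can be compared against a later week. The sample figures below are hypothetical and exist only to show the calculation.

```python
from statistics import mean


def weekly_average(minutes_by_day):
    """Average after-hours documentation minutes per clinic day."""
    return mean(minutes_by_day) if minutes_by_day else 0.0


def percent_change(baseline, current):
    """Percent change from baseline; negative means less after-hours time."""
    if baseline == 0:
        raise ValueError("baseline must be greater than zero")
    return (current - baseline) / baseline * 100


# Hypothetical self-reported minutes for one clinician, five clinic days each.
baseline_week = [60, 75, 50, 65, 70]  # the week before go-live
week_six = [30, 25, 40, 20, 35]       # a week in the optimisation period

baseline_avg = weekly_average(baseline_week)
current_avg = weekly_average(week_six)
print(f"{baseline_avg:.0f} -> {current_avg:.0f} min/day "
      f"({percent_change(baseline_avg, current_avg):+.0f}%)")
```

Sharing a figure like this with each clinician during the monthly check-in gives them the visible time metric that the paragraph above describes.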

What Does the First 30 Days Actually Look Like?

The week-by-week timeline describes what should happen. The first 30 days in reality includes a predictable learning curve that clinic owners should expect and plan for.

Days 1 to 5: The adjustment period. The first encounters feel different. Clinicians are aware of the ambient listening in a way they will not be at Day 30. Notes in this period often require more editing than notes at Day 30. This is normal and expected. It does not indicate a system quality problem. It indicates that the templates have not yet been calibrated to the clinician’s specific vocabulary and clinical patterns. The feedback submitted in this period is the most valuable feedback of the entire implementation.

Days 6 to 14: The calibration period. Template adjustments made from Day 1 to 5 feedback begin producing noticeably better notes. Clinicians start spending less time editing and more time reviewing. After-hours documentation time begins to fall for most clinicians by the end of this period. The 5-session threshold that predicts long-term adoption is typically crossed by Day 10 to 12.

Days 15 to 30: The habit formation period. By Day 15 most clinicians have stopped consciously thinking about the ambient listening and have integrated it into the clinical encounter as naturally as the physical examination. Note review and approval becomes a 2 to 5 minute task rather than a 10 to 15 minute one. The documentation that previously extended past clinic hours is completing within them for the majority of encounters.

A 2025 PMC narrative review of AI scribe implementation across 18 studies found that AI scribes consistently reduce documentation burden and cognitive load, improve workflow efficiency, and save time across clinical settings. The review also noted that frequent documentation omissions and occasional clinically significant hallucinations require active clinician review throughout the implementation period. This is why clinician sign-off remains mandatory and why the supervised go-live period in Week 3 is not optional.

Small Clinic vs Enterprise: Why the Timeline Is Different

The distinction between a small clinic and an enterprise health system implementation matters practically because clinic owners searching for implementation timelines predominantly encounter enterprise data.

| Factor | Small clinic (1 to 5 providers) | Enterprise health system |
|---|---|---|
| Implementation timeline | 2 to 4 weeks | 12 to 16 weeks |
| Clinicians to train | 1 to 5 | 500 to 5,000 |
| EHR integration complexity | Pre-built native connector | Multi-site legacy integration |
| Template configuration | 1 to 3 specialty templates | 20 to 80 specialty templates |
| IT involvement | Minimal, credentials only | Dedicated IT project team |
| Change management | Clinical champion only | Multi-level governance committee |
| Go-live approach | Full practice simultaneously | Phased rollout by department |
| Performance review | Monthly vendor check-in | Quarterly governance review |

None of the factors that extend enterprise timelines to 12 to 16 weeks applies to a small clinic environment. The 2 to 4 week figure for small clinics is not an aspirational benchmark. It is the standard delivery timeline for a pre-built native integration with a prepared implementation team.

What Can Go Wrong and How Long Does It Add?

An honest implementation timeline includes an honest account of what delays it and by how much.

EHR credential delays: adds 3 to 5 business days. If the clinic does not have EHR administrator credentials readily available or if the EHR vendor requires a formal approval process to grant API access, technical integration is delayed until credentials are provided. This is the most common source of Week 1 extension.

Template revision cycles: adds 2 to 4 business days. If the clinical champion’s first template review generates significant feedback requiring a second full configuration cycle, Week 2 extends. The fix is a more detailed configuration brief in Week 1 so that the first template draft is closer to the clinician’s actual documentation style.

Clinician scheduling conflicts during orientation: adds 3 to 7 business days. If one or more clinicians cannot attend the Week 2 orientation session due to schedule conflicts, go-live is delayed until all clinicians are oriented. The fix is scheduling the orientation date in Week 1, not Week 2.

Inconsistent use during supervised go-live: adds 1 to 2 weeks. If clinicians do not consistently use the system during Week 3, the supervised go-live period extends. The fix is setting a clear minimum use expectation, for example every applicable encounter during the go-live week, with the clinical champion actively supporting this.

Total maximum delay from all four factors combined: 3 to 4 weeks added to the base timeline, producing a worst-case timeline of 6 to 8 weeks for a small clinic with multiple compounding delays. This is still substantially shorter than a standard enterprise deployment.
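For clinics that want to test their own scenario, the four delay ranges can be added up directly. The sketch below stacks them end to end, which assumes no two delays overlap, and converts "1 to 2 weeks" at 5 business days per week; both are simplifying assumptions rather than anything the timeline itself specifies.

```python
# Delay ranges in business days, taken from the four delay factors above.
# "1 to 2 weeks" is converted at 5 business days per week (an assumption).
DELAYS = {
    "ehr_credentials": (3, 5),
    "template_revisions": (2, 4),
    "orientation_scheduling": (3, 7),
    "inconsistent_go_live_use": (5, 10),
}

BUSINESS_DAYS_PER_WEEK = 5


def stacked_delay(delays):
    """Sum the low and high ends, assuming the delays never overlap."""
    low = sum(lo for lo, _ in delays.values())
    high = sum(hi for _, hi in delays.values())
    return low, high


low, high = stacked_delay(DELAYS)
print(f"Stacked delay: {low} to {high} business days")
print(f"In weeks: {low / BUSINESS_DAYS_PER_WEEK:.1f} to "
      f"{high / BUSINESS_DAYS_PER_WEEK:.1f}")
```

Stacked this way the range is 13 to 26 business days, or roughly 2.6 to 5.2 weeks. The 3 to 4 weeks quoted above sits inside that range, which would be consistent with some delays overlapping in practice rather than running back to back.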

Without a Structured Implementation vs With a Structured Implementation

Without a structured implementation:

- Vendor provides system access and generic training materials

- Clinicians configure their own templates using default settings

- No supervised go-live period

- First month note quality inconsistent and requires significant editing

- Clinicians use the system on some encounters but not others

- No feedback loop for template corrections

- 30 to 40% lower adoption rate in first 90 days

- Tool abandoned by some clinicians within 3 months

- Documentation improvement partial and unmeasured

With a structured implementation:

- Vendor implementation team leads discovery, configuration, and orientation

- Templates configured to the clinic’s clinical language before first note is generated

- Supervised go-live with active feedback loop in Week 3

- First month note quality improves measurably week on week

- All clinicians using the system on all applicable encounters by Week 4

- After-hours documentation time falling measurably by end of first month

- Adoption self-reinforcing because clinicians can see the time savings in their own workday

- Documentation improvement consistent and visible from Day 30 onwards

The compounding outcome: Clinics with structured implementations reach full adoption in 4 weeks and maintain it. Clinics without structured implementations reach partial adoption in 4 weeks and decline from there. The difference is not the technology. It is the implementation process.

What This Means for Clinic Owners Evaluating AI Documentation in 2026

The question is not whether to implement. The question is what to look for before you do. Physician AI usage jumped from 38% to 66% between 2023 and 2024 according to a 2025 AI clinical documentation analysis. The adoption curve in ambulatory medicine is steep and ongoing. The implementation timeline, 2 to 4 weeks for a small clinic, is no longer a barrier for most practices. The relevant evaluation questions are about EHR integration depth, template configuration capability, and what the structured implementation process looks like.

Native EHR integration is non-negotiable for a short implementation timeline. A system that integrates natively with your EHR and completes technical setup in days rather than weeks compresses the implementation timeline by the largest single factor. Non-native integrations introduce clipboard workflows, reliability risks, and ongoing adoption friction that native integrations do not. Before selecting any AI documentation system, confirm the integration method and verify whether it requires IT involvement beyond credential provision.

Template quality before go-live determines first-month note quality. A generic SOAP template applied without configuration produces notes that require heavy editing and do not support accurate E/M coding from the first encounter. A template configured to the clinic’s clinical language, note structure, and specialty-specific documentation requirements produces notes that require minimal editing and support accurate coding from Day 1. The configuration time in Weeks 1 and 2 determines the note quality in Weeks 3 and 4. Do not skip it.

Age and experience are not adoption barriers. The TPMG analysis found no correlation between adoption rates and either clinician age or years in practice. The barriers to adoption are workflow fit and template quality, both of which are implementation variables, not clinician variables.

The ROI timeline is measurable within the first billing cycle. Clinics that configure templates for coding accuracy before go-live see the revenue impact in the first billing cycle after go-live, not after a learning period. The 5 to 10% revenue improvement from better coding capture cited in AI clinical documentation analysis is available from the first correctly coded encounter, not from some future optimised state.
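To see what the 5 to 10% range means for a specific practice, it can be applied to the clinic's own monthly billed revenue. The snippet below is only an illustration: the 80,000 monthly revenue figure is a hypothetical input, not a benchmark, and the function name is made up for this example.

```python
def revenue_uplift_range(monthly_revenue, low_pct=5.0, high_pct=10.0):
    """Monthly revenue gain at the low and high ends of the cited 5 to 10% range."""
    return monthly_revenue * low_pct / 100, monthly_revenue * high_pct / 100


# Hypothetical clinic billing 80,000 per month before go-live.
low_gain, high_gain = revenue_uplift_range(80_000)
print(f"Estimated monthly uplift: {low_gain:,.0f} to {high_gain:,.0f}")
```

Comparing that range against the monthly cost of the system gives a first-pass view of whether the coding-capture gain alone would cover it.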

The full dataset on after-hours charting and clinician turnover costs is in “After-Hours Charting Is Driving Clinician Turnover”, which covers the 2025 JAMA Network Open and NEJM Catalyst evidence in detail.

The same documentation quality improvements that reduce after-hours charting also address the undercoding and prior authorization documentation gaps that affect revenue in ways that are not visible in standard AR reporting.

MedLaunch Documentation Intelligence implements with a structured 2 to 4 week process for small GP, specialty, and allied health clinics. Native integration with Epic and Athena Health. Templates configured to your clinical language and specialty before the first note is generated. Supervised go-live with active feedback loop. Most clinics see measurable after-hours documentation reduction within the first 30 days.

The full workflow and what the implementation process looks like for your clinic type is on the AI Clinical Documentation Intelligence solution page.

Frequently Asked Questions

How long does it take to implement AI clinical documentation in a small clinic?

A small clinic, defined as 1 to 5 providers with a single EHR system, implements AI clinical documentation in 2 to 4 weeks from kickoff to first independently approved note. Week 1 covers EHR integration and discovery. Week 2 covers template configuration and clinician orientation. Week 3 is supervised go-live. Week 4 is independent use with first performance review. Enterprise health system implementations take 12 to 16 weeks due to multi-site complexity, thousands of clinicians, and legacy integration requirements. That timeline does not apply to small independent practices.

What happens in the first week of AI documentation implementation?

In Week 1, the vendor implementation team conducts a discovery session to understand the clinic’s EHR environment, note templates, clinical specialties, and workflows. Technical EHR integration is established and tested. For native Epic or Athena integrations, this is completed within 3 to 5 business days. The clinical champion spends 2 to 3 hours in the discovery session. No patients are affected and no clinical workflow changes occur in Week 1.

What is the most common cause of implementation delays in small clinics?

The most common causes of delay are EHR credential availability (adds 3 to 5 business days), clinician scheduling conflicts during Week 2 orientation (adds 3 to 7 business days), and inconsistent use during the Week 3 supervised go-live period (adds 1 to 2 weeks). None of these are technology failures. All are resolvable with preparation. Scheduling the clinician orientation date in Week 1 rather than Week 2 eliminates the most common single cause of timeline extension.

Do clinicians need IT skills to use AI clinical documentation?

No. Clinicians using ambient AI documentation do not require technical skills beyond the ability to use their existing EHR. The system listens during the encounter, generates the note, and presents it for review within the EHR interface. The only technical step for the clinician is starting and ending an encounter session within the app. IT involvement in a small clinic implementation is limited to providing EHR administrator credentials for the integration setup. No ongoing IT support is required for standard use.

Does physician age or years in practice affect how quickly they adopt AI documentation?

No. A TPMG analysis of more than 2.5 million patient encounters published in NEJM Catalyst found that AI scribe users had an average age of 47 and were approximately 19 years out of training, with no significant correlation between these factors and adoption rates. The factors that predict adoption success are workflow fit, template quality configured before go-live, and structured support in the first 30 days. Clinicians who struggled most with documentation before implementation are often among the fastest adopters because the time savings are most immediate and most visible to them.

How quickly does a clinic see a return on investment from AI clinical documentation?

Healthcare organisations consistently achieve 1 to 2 hours of daily time savings per provider and 5 to 10% revenue improvements from better coding capture, with typical ROI seen within 14 months, according to a 2025 AI clinical documentation analysis. A 2026 JAMA Network Open cohort study by Holmgren et al. found that ambient AI documentation is associated with measurable changes in RVUs and claim denial rates in favour of adopters. Clinics that configure templates for coding accuracy before go-live see the revenue impact in the first billing cycle, not after an extended learning period.

What is the difference between a native EHR integration and a non-native integration for AI documentation?

A native EHR integration connects the AI documentation system directly within the EHR workflow using a pre-built API connector. The clinician starts and ends sessions within the EHR interface and the approved note is written directly into the patient record without any manual transfer step. A non-native integration requires the clinician to work in a separate interface and copy or transfer the generated note into the EHR. Native integrations complete technical setup in 2 to 5 business days, produce more reliable note transfer, and generate higher adoption rates because they fit the existing workflow rather than adding a step to it. For small clinics choosing between AI documentation systems, native EHR integration is the single most important technical requirement.

Conclusion

The honest answer to “how long does AI clinical documentation take to implement” is 2 to 4 weeks for a small clinic with a pre-built native EHR integration, a structured implementation process, and a clinical champion who can commit a few hours across those weeks.

That answer is not widely available because most implementation data comes from enterprise deployments that bear no resemblance to what a small GP or specialty clinic experiences. The complexity that drives enterprise timelines to 12 to 16 weeks (thousands of clinicians, multi-site legacy integrations, governance committees, and phased change management) does not exist in a small practice environment.

What matters for a small clinic is simpler and more actionable. Native EHR integration or not. Templates configured before go-live or applied generically. Supervised go-live with a feedback loop or unsupported independent launch. Structured first-30-days support or not.

The clinics that implement in 2 to 4 weeks and maintain full adoption are the ones that got those four variables right. The ones that extend to 6 to 8 weeks or see partial adoption are the ones that did not. The technology is the same. The implementation process is the difference.

Ready to know your exact implementation timeline?

Most MedLaunch clinics are fully live in 2 to 4 weeks. See the week-by-week implementation process for your specific clinic type and EHR on our solution page.