Key Takeaways: HIPAA Compliance for Instagram

1. Platform vs. Implementation: Instagram itself is not HIPAA-compliant, but using Instagram in a healthcare clinic can be, provided you have the right technical setup to bridge the gap.

2. Legal Accountability: The obligation sits with your clinic, not with Meta. HIPAA governs how you handle patient data, not which platforms exist in the public sphere.

3. What Constitutes PHI in DMs: A name combined with a health detail in a DM constitutes Protected Health Information (PHI), even if the patient volunteers it unprompted.

4. The Compliant AI Layer: The solution is a compliant AI layer that recognizes health queries and keeps PHI out of the DM thread entirely by redirecting to secure channels.

5. Mandatory BAA: Any vendor you deploy must sign a Business Associate Agreement (BAA). No BAA means no compliant deployment, regardless of how “secure” the software claims to be.

“We’d love to use Instagram for patient communication, but what about HIPAA?”

This is the single most common reason clinic owners pause when they see what Instagram automation can do for appointment bookings and patient engagement. The concern is legitimate. Healthcare is one of the most regulated industries in the world, and the consequences of a HIPAA violation are not abstract: fines, reputational damage, and OCR investigations are real outcomes that real clinics have faced.

But here is what most people get wrong about HIPAA and Instagram: the regulation does not prohibit clinics from using Instagram. It prohibits the mishandling of protected health information, and those are very different things.

This guide explains exactly where the compliance line sits, what makes a setup compliant versus non-compliant, what a properly configured Instagram AI bot does to protect your clinic, and the specific questions you need to ask any vendor before you deploy. By the end, you will have a clear picture of how to use Instagram as a patient communication channel without putting your clinic at risk.


1. Does HIPAA Apply to Instagram?

Direct answer: Instagram itself is not a HIPAA-covered entity. But your clinic is, and that is what matters.

HIPAA applies to covered entities (healthcare providers, health plans, and healthcare clearinghouses) and their business associates. Meta, the company that owns Instagram, is not a covered entity under HIPAA. Meta does not sign Business Associate Agreements. Meta’s platform is not designed to meet HIPAA’s technical safeguards requirements.

What this means in practice: the compliance obligation falls entirely on your clinic, not on the platform. If your clinic uses Instagram in a way that involves protected health information, your clinic is responsible for ensuring that information is handled in accordance with HIPAA, regardless of what platform it travels through.

What Counts as PHI in a DM Context?

Under HIPAA, protected health information (PHI) is any individually identifiable health information held or transmitted by a covered entity. In the context of Instagram DMs, PHI is created when a patient’s identity is combined with any health-related detail. Specifically:

- Is PHI: “Hi, I’m Sarah Jones, and I need to book a follow-up for my diabetes medication review.”

- Is PHI: “Can I get a referral for my anxiety treatment? My date of birth is 12/03/1985.”

- Is PHI: A patient sending a photo of test results or a prescription label

- Is NOT PHI: “What are your opening hours?”

- Is NOT PHI: “Do you offer bulk billing?”

- Is NOT PHI: “What’s the address of your Bondi clinic?”

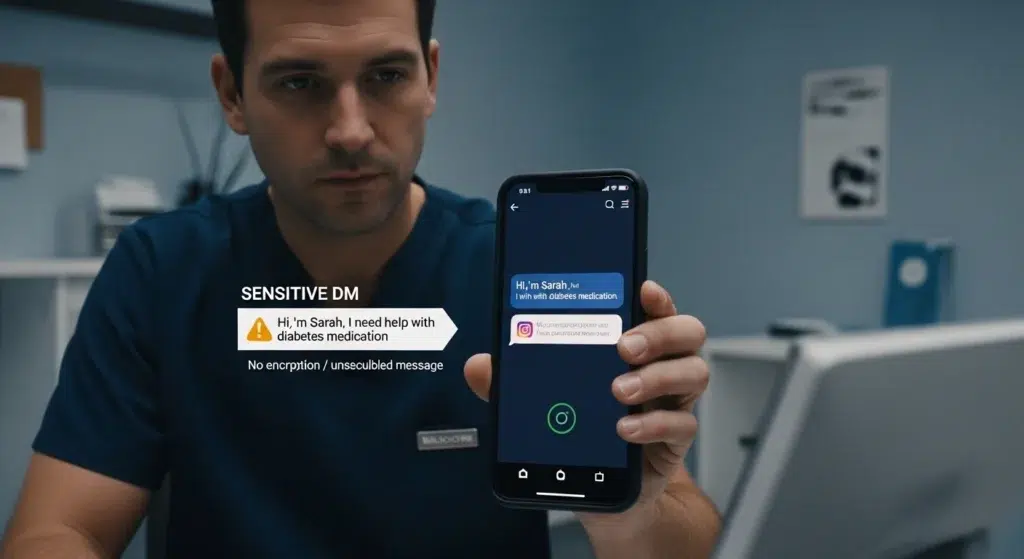

The challenge is that patients do not always keep their inquiries general. A patient who DMs about an appointment will often include clinical details because, from their perspective, that context is helpful. Without a system designed to handle this, that information sits in an unencrypted, non-auditable DM thread. That is where the compliance risk lives.
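The distinction drawn in the examples above (an identifier combined with a health detail) can be sketched as a simple screening function. This is a minimal illustration, not a production classifier: the keyword list, the two identifier patterns, and the name `looks_like_phi` are all assumptions made for the example, and a keyword heuristic will miss many real cases. Because the sender's Instagram account may itself be identifying, a cautious deployment might treat any health detail as sensitive.

```python
import re

# Hypothetical vocabulary for illustration; real coverage would need to be far broader.
HEALTH_TERMS = {
    "diabetes", "anxiety", "medication", "prescription",
    "referral", "treatment", "test results",
}

# Identifier patterns (assumptions): a self-introduced name, or a date such as 12/03/1985.
NAME_PATTERN = re.compile(r"\b(?i:i am|i['’]m|my name is)\s+[A-Z][a-z]+")
DOB_PATTERN = re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b")


def looks_like_phi(message: str) -> bool:
    """Return True when a message pairs an identifier with a health detail."""
    lowered = message.lower()
    has_health_detail = any(term in lowered for term in HEALTH_TERMS)
    has_identifier = bool(
        NAME_PATTERN.search(message) or DOB_PATTERN.search(message)
    )
    return has_health_detail and has_identifier
```

Run against the sample messages above, the two text examples marked as PHI are flagged, while the questions about opening hours and bulk billing are not. Image attachments such as test results would need separate handling.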

2. Where the Real Risk Lives

The risk is not that Instagram exists in your clinic’s workflow. The risk is unmanaged DMs containing PHI with no technical safeguards, no audit trail, no access controls, and no Business Associate Agreement covering the infrastructure.

Consider what an unmanaged Instagram DM inbox looks like from a HIPAA perspective:

- Messages are stored on Meta’s servers: infrastructure your clinic has no control over and no BAA covering it

- There is no audit log of who accessed the messages or when

- There are no role-based access controls; anyone with the Instagram login can read every DM

- Messages are not encrypted in a manner that meets HIPAA’s technical safeguards requirements

- There is no breach notification protocol in place if Meta experiences a data incident

❝ The HHS Office for Civil Rights (OCR) does not require a breach to have occurred to issue a penalty. The absence of required safeguards — even if no data was actually misused — is itself a violation.

OCR’s HIPAA enforcement history includes penalties for failures to implement appropriate technical safeguards, failures to conduct risk assessments, and failures to have Business Associate Agreements in place. The fact that the channel involved was a consumer social media platform would not be a mitigating factor; it would likely be an aggravating one.

The conclusion is not that clinics should abandon Instagram. It is that clinics need a layer between the patient DM and their clinic’s operations that manages compliance automatically so PHI never sits unprotected in a consumer messaging inbox.

3. The Difference Between Instagram and a HIPAA-Compliant Instagram Setup

This is the section most compliance guides skip because most compliance guides are written to warn you away from using the channel, not to show you how to use it correctly. The reality is that healthcare clinics can use Instagram as a patient communication channel in a HIPAA-aligned way. The key is understanding what a compliant setup actually looks like.

What Makes Native Instagram DMs Non-Compliant

- No BAA with Meta

- PHI transmitted and stored on Meta’s infrastructure without healthcare-grade safeguards

- No audit trail or access controls

- No breach notification protocol

- No encryption that meets HIPAA Security Rule standards

What a Compliant Instagram Setup Looks Like

A properly configured Instagram AI bot introduces a compliance layer between the patient’s DM and your clinic’s patient data infrastructure. Here is how it works:

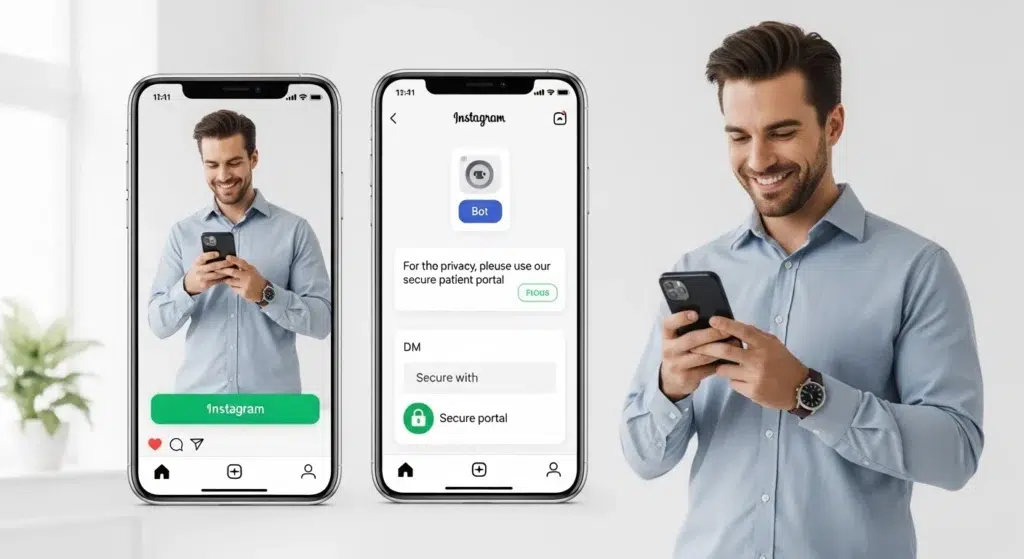

- The bot is the first touchpoint. When a patient DMs your clinic, they interact with the bot, not with a staff member accessing Instagram directly. The bot handles the conversation within a defined, compliance-configured scope.

- PHI never enters the DM thread. The bot is configured to redirect any collection of sensitive patient information to a secure, encrypted channel: a HIPAA-compliant intake form, a secure messaging portal, or a direct call. The DM thread itself contains only routing-level information: appointment type, preferred timing, and general service inquiries.

- Sensitive data flows through compliant infrastructure. All PHI is collected and stored through the vendor’s BAA-covered systems, not through Instagram’s servers. The patient experience is seamless; the compliance architecture runs in the background.

- Audit trails are maintained. Every interaction involving patient data is logged in the vendor’s system with timestamps, access records, and retrievable history. This satisfies HIPAA’s audit control requirements.

- Access controls are enforced. Only authorised staff members can access patient conversation data. Access is role-based and logged.

❝ Think of it this way: Instagram is the waiting room. The compliant system is the consultation room. PHI only ever enters the consultation room — never the waiting room.
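One way to picture the waiting-room and consultation-room split is an allow-list of routing-level fields. The sketch below is an assumption-laden illustration rather than a description of any vendor's implementation: the field names and the function `split_for_channels` are hypothetical. Anything not on the allow-list is never echoed into the DM thread and is instead marked for collection through the secure channel.

```python
# Hypothetical allow-list of routing-level fields, following the list above.
ROUTING_FIELDS = {"appointment_type", "preferred_timing", "service_inquiry"}


def split_for_channels(collected: dict[str, str]) -> tuple[dict[str, str], list[str]]:
    """Separate DM-safe routing data from fields that need the secure channel.

    Returns the routing-level values that may stay in the DM thread, plus the
    names (not the values) of every other field, sorted for stable output.
    """
    dm_safe = {k: v for k, v in collected.items() if k in ROUTING_FIELDS}
    needs_secure_channel = sorted(k for k in collected if k not in ROUTING_FIELDS)
    return dm_safe, needs_secure_channel
```

Using an allow-list rather than a block-list means an unexpected field defaults to the secure channel, which is the safer failure mode.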

This is the architecture behind MedLaunch’s Instagram AI Bot for healthcare clinics. For a broader overview of how this fits into a clinic’s patient communication strategy, see our Complete Guide to Instagram AI Bots for Healthcare.

4. The Business Associate Agreement

A Business Associate Agreement (BAA) is a legally required contract between a HIPAA covered entity (your clinic) and any third party (a business associate) that handles protected health information on the clinic’s behalf. Under HIPAA, no covered entity may share PHI with a vendor without a signed BAA in place.

The HHS provides sample BAA provisions that outline what the agreement must cover. At minimum, a BAA must:

- Define the permitted uses and disclosures of PHI by the business associate

- Require the business associate to implement appropriate safeguards to protect PHI

- Require the business associate to report any breach or security incident to the covered entity

- Require the business associate to return or destroy PHI at the end of the contract

- Require the business associate to ensure any subcontractors also comply with HIPAA

Why Meta Will Never Sign a BAA

Meta does not offer BAAs and has explicitly stated that its platforms are not intended for HIPAA-covered communications. This is not a workaround or a grey area; it is a firm position from the platform.

This does not mean Instagram is unusable for healthcare. It means the BAA you need is with your AI bot vendor, the party that actually handles your patient data, and not with Instagram. The vendor’s compliant infrastructure sits between Instagram and your patient data. Instagram is simply the channel through which the initial conversation begins. The data itself never lives on Instagram.

For a deeper breakdown of how this applies specifically to the booking flow, the HIPAA compliance section of our appointment booking guide walks through the objection in practical terms.

❝ Any vendor who cannot or will not sign a BAA is not appropriate for healthcare deployment. This is not a negotiating point — it is a legal requirement. Walk away.

5. The 5 Technical Safeguards a Compliant Instagram Bot Must Have

HIPAA’s Security Rule requires covered entities and their business associates to implement administrative, physical, and technical safeguards to protect electronic PHI (ePHI). For an Instagram AI bot operating in a healthcare context, these are the five non-negotiable technical requirements:

1. No PHI in the DM Thread

The bot must be configured to prevent the collection or storage of sensitive patient information within Instagram’s native DM infrastructure. Any intake of clinical details, identifiers, or health information must be redirected to a secure, BAA-covered channel. This is the foundational requirement; everything else depends on it.

2. Encryption in Transit and at Rest

All patient data transmitted between the bot and the clinic’s systems must be encrypted in transit using current standards (TLS 1.2 or higher). Data stored by the vendor must be encrypted at rest. This applies to both the conversation data and any patient records created or updated through the system.
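For the in-transit part of this requirement, a client can refuse to negotiate anything older than TLS 1.2. The snippet below is a minimal sketch using Python's standard `ssl` module; the function name `strict_tls_context` is an assumption for illustration, and it says nothing about how any particular vendor configures its systems. Encryption at rest is a separate concern that is not shown here.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Build a client-side TLS context that rejects protocol versions below TLS 1.2."""
    # create_default_context() enables certificate verification and hostname checking.
    context = ssl.create_default_context()
    # Refuse older protocol versions during the handshake.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

A context like this can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=strict_tls_context())`.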

3. Role-Based Access Controls

Access to patient conversation data must be restricted to authorised personnel only. The vendor’s system must support role-based access so a receptionist can see booking confirmations, but cannot access clinical notes, and a clinician can access relevant patient context, but cannot modify billing records. Every access event should be tied to a unique user identity, not a shared login.
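The receptionist and clinician example can be sketched as a deny-by-default permission table. The role names come from the paragraph above; the permission strings, the `User` type, and `is_allowed` are hypothetical names chosen for this illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    RECEPTIONIST = "receptionist"
    CLINICIAN = "clinician"


# Hypothetical permission strings. Anything not listed for a role is denied.
PERMISSIONS: dict[Role, frozenset[str]] = {
    Role.RECEPTIONIST: frozenset({"booking:read"}),
    Role.CLINICIAN: frozenset({"booking:read", "clinical_notes:read"}),
}


@dataclass(frozen=True)
class User:
    user_id: str  # unique per person, never a shared login
    role: Role


def is_allowed(user: User, permission: str) -> bool:
    """Return True only if the user's role explicitly grants the permission."""
    return permission in PERMISSIONS.get(user.role, frozenset())
```

With this table, a receptionist can read bookings but not clinical notes, and a clinician can read clinical notes but has no `billing:write` permission, so a billing change is refused.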

4. Audit Logs

HIPAA requires covered entities to maintain records of who accessed ePHI and when. The vendor’s system must generate and retain audit logs for all interactions involving patient data, retrievable in the event of an OCR investigation or internal compliance review. Logs should include user ID, timestamp, action taken, and the data accessed.
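An audit entry carrying the four fields named above might look like the following sketch. The `AuditEvent` type, the `record_event` helper, and the in-memory list standing in for durable storage are assumptions for illustration. Note that the entry stores a reference to the data accessed, not the patient data itself.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    user_id: str    # who accessed the data
    timestamp: str  # when, as an ISO 8601 string in UTC
    action: str     # what they did, e.g. "read"
    resource: str   # which record, as an identifier rather than its contents


def record_event(
    log: list[str],
    user_id: str,
    action: str,
    resource: str,
    now: Optional[datetime] = None,
) -> AuditEvent:
    """Append one JSON-encoded audit entry to the log and return the event."""
    moment = now if now is not None else datetime.now(timezone.utc)
    event = AuditEvent(user_id, moment.isoformat(), action, resource)
    log.append(json.dumps(asdict(event), sort_keys=True))
    return event
```

The optional `now` argument exists only so the timestamp can be fixed in tests; in normal use the current UTC time is recorded.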

5. Breach Notification Protocol

Under HIPAA’s Breach Notification Rule, a business associate must notify the covered entity of any breach of unsecured PHI without unreasonable delay and no later than 60 calendar days after discovery. Your vendor’s BAA must specify their breach notification obligations, and their internal processes must be designed to detect and report incidents within this window. Ask specifically: what does your incident response process look like, and what is your average time to notify a covered entity of a confirmed breach?
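The 60-day outer limit is simple date arithmetic, sketched below. The function names are assumptions for illustration. The calculation gives only the latest permissible date: the "without unreasonable delay" standard still applies, and a BAA may set a shorter window.

```python
from datetime import date, timedelta

NOTIFICATION_WINDOW_DAYS = 60  # outer limit stated in the paragraph above


def notification_deadline(discovered_on: date) -> date:
    """Latest date by which the covered entity must have been notified."""
    return discovered_on + timedelta(days=NOTIFICATION_WINDOW_DAYS)


def days_remaining(discovered_on: date, today: date) -> int:
    """Days left before the deadline; a negative value means it has passed."""
    return (notification_deadline(discovered_on) - today).days
```

For a breach discovered on 1 March 2024, `notification_deadline(date(2024, 3, 1))` returns 30 April 2024.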

6. What to Ask Any Vendor Before You Deploy

Before signing with any Instagram AI bot vendor for a healthcare deployment, put these questions to them directly. How they answer will tell you everything you need to know about whether they are genuinely built for healthcare or repurposed from a generic customer service tool.

- Will you sign a Business Associate Agreement? The only acceptable answer is yes, with a BAA they can provide for review before contract signing, not a promise to produce one later.

- Does your system ever transmit or store PHI through Instagram’s native infrastructure? The answer must be no. Any vendor who is unclear on this point has not designed their system with HIPAA in mind.

- Where is patient data stored, and is it encrypted at rest and in transit? Look for named data centres in compliant jurisdictions, encryption standards (AES-256 at rest, TLS 1.2+ in transit), and clear answers, not vague assurances.

- How do you handle a data breach, and what is your notification timeline? They should have a documented incident response process and be able to specify how quickly they would notify your clinic of a confirmed breach.

- What happens to patient data if we end our contract with you? HIPAA requires that PHI be returned or destroyed at contract end. The BAA should specify this. Confirm the vendor’s process and timeline.

- Do you have documented HIPAA compliance procedures we can review? A credible vendor will have a compliance documentation package ready. If they hesitate or say documentation is “in progress,” that is a red flag.

- Have you undergone a third-party security audit or HIPAA risk assessment? Best-in-class vendors have independent verification of their compliance posture. Ask for the most recent assessment date and scope.

MedLaunch’s Healthcare Automation Solutions are built with HIPAA-aligned architecture from the ground up, not retrofitted for compliance after the fact. Every deployment includes a BAA, and our compliance documentation is available for review before any contract is signed.

7. What to Do When a Patient Volunteers PHI Unprompted

Even with the best-configured bot, patients will sometimes include clinical details in their first DM without being asked. This is one of the most common edge cases clinic owners raise, and it is a legitimate one.

Under HIPAA’s minimum necessary standard, covered entities must make reasonable efforts to limit the use and disclosure of PHI to the minimum necessary to accomplish the intended purpose. OCR does not require perfection; it requires reasonable, documented safeguards.

For the specific scenario of a patient sending PHI unprompted via Instagram DM, a well-configured bot should:

- Not store or process the clinical detail: the message is flagged and routed to staff without the PHI being retained in an unprotected system

- Redirect the patient to a secure channel: a response like “For your privacy, please share any clinical details through our secure patient portal [link]” removes the PHI from the DM thread

- Notify the relevant staff member: so the inquiry is followed up through appropriate channels without the clinical detail sitting in the Instagram inbox

Additionally, the bot’s welcome message should include a brief disclaimer, something like “Please don’t share personal health details here. For anything clinical, use our secure patient portal or call us directly.” This is not just good practice; it creates a documented record that your clinic made reasonable efforts to prevent PHI from entering the channel.
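The three behaviours listed above, together with the welcome disclaimer, can be sketched as a single decision function. Everything here is hypothetical: the portal URL is a placeholder, `BotDecision` and `handle_dm` are illustrative names, and the PHI detector is passed in as a parameter because the right detection method depends on the deployment.

```python
from dataclasses import dataclass
from typing import Callable

SECURE_PORTAL_URL = "https://example.com/portal"  # placeholder, not a real portal

WELCOME_MESSAGE = (
    "Please don't share personal health details here. For anything clinical, "
    "use our secure patient portal or call us directly."
)
REDIRECT_REPLY = (
    "For your privacy, please share any clinical details through our secure "
    f"patient portal: {SECURE_PORTAL_URL}"
)
DEFAULT_REPLY = "Thanks for getting in touch. How can we help with your booking?"


@dataclass(frozen=True)
class BotDecision:
    reply: str                 # what the bot sends back in the DM
    notify_staff: bool         # whether a staff member should follow up
    retain_message_text: bool  # whether the bot's own systems keep the text


def handle_dm(message: str, contains_phi: Callable[[str], bool]) -> BotDecision:
    """Decide how to respond to an incoming DM without retaining volunteered PHI."""
    if contains_phi(message):
        # Redirect to the secure channel, alert staff, and do not keep the text.
        return BotDecision(REDIRECT_REPLY, notify_staff=True, retain_message_text=False)
    return BotDecision(DEFAULT_REPLY, notify_staff=False, retain_message_text=True)
```

Keeping the detector separate from the response logic lets a clinic tighten detection later without touching the redirect and notification behaviour.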

Document your policy. OCR enforcement focuses heavily on what you documented and assessed. A clinic with a written policy, a configured system, and evidence of staff training is in a fundamentally different compliance position than one that has none of these things, even if the underlying technology is identical. This principle applies equally to your AI Medical Receptionist and any other automated patient touchpoint in your clinic.

Conclusion

HIPAA does not make Instagram off-limits for healthcare clinics. It makes unmanaged Instagram off-limits. The distinction matters because the clinics treating Instagram as a patient communication channel right now are not ignoring compliance. They have solved it.

A properly configured Instagram AI bot keeps PHI out of the DM thread entirely, routes sensitive data through BAA-covered infrastructure, maintains the audit trails HIPAA requires, and gives your clinic a documented compliance posture that would withstand OCR scrutiny. None of this requires a large IT team, a legal department, or months of implementation work.

What it requires is choosing a vendor who built compliance in from the start, not one who bolted it on as an afterthought, and not a generic chatbot tool that was never designed for a clinical environment.

MedLaunch’s Instagram AI Bot is built specifically for healthcare clinics, with HIPAA-aligned architecture, a BAA available before contract signing, and an ongoing support model that keeps your setup compliant as the platform and the regulations evolve.

Frequently Asked Questions

Is Instagram HIPAA compliant for healthcare providers?

Instagram itself is not HIPAA compliant: Meta does not sign Business Associate Agreements, and its platform does not meet HIPAA’s technical safeguards requirements. However, healthcare clinics can use Instagram in a HIPAA-aligned way by deploying a compliant AI layer that keeps protected health information out of Instagram’s native infrastructure entirely.

Can doctors and healthcare providers use Instagram DMs with patients?

Yes, with the right setup. Clinics can use Instagram DMs for patient communication, provided they do not transmit or store protected health information through Instagram’s native infrastructure. A properly configured Instagram AI bot routes sensitive information through HIPAA-compliant channels while using Instagram DMs only for non-sensitive routing and engagement.

Does HIPAA apply to social media in healthcare?

HIPAA applies to how covered entities handle protected health information regardless of the channel through which that information travels. Social media platforms themselves are not covered entities under HIPAA. But a healthcare provider’s use of social media to communicate with patients falls under the provider’s HIPAA obligations.

How do I make my clinic’s Instagram HIPAA compliant?

The steps are: (1) Deploy a healthcare-specific Instagram AI bot that keeps PHI out of the DM thread and routes sensitive data through compliant channels. (2) Ensure your vendor signs a BAA before any patient data flows through their system. (3) Confirm the vendor’s infrastructure meets HIPAA’s technical safeguards — encryption, access controls, audit logs, breach notification. (4) Add a disclaimer to the bot’s welcome message advising patients not to share clinical details via DM. (5) Document your compliance policy and train relevant staff. (6) Review your setup annually as both the platform and regulations evolve.

What are the HIPAA rules for healthcare social media use?

HIPAA does not have a dedicated social media rule. Its existing Privacy Rule and Security Rule apply to all forms of patient communication, including social media. The key principles: do not post or transmit PHI through unsecured channels, do not use social media in a way that could identify a patient without their explicit authorisation, ensure any vendor handling PHI on your behalf has signed a BAA, and maintain the same technical safeguards for digital patient communication as you would for any other ePHI. The HHS Office for Civil Rights has issued guidance on electronic communications that applies directly to social media use in healthcare settings.

Ready to Launch a HIPAA-Aligned Instagram Bot for Your Clinic?

Stop losing high-intent leads to the “response gap.” MedLaunch provides the secure AI layer and signed BAA you need to book appointments 24/7 without risking patient privacy.