Key Takeaways: AI-Administered PHQ-9 Validity

1. Inherited Validity: AI administration is valid when it delivers the standard nine items in their validated order and wording. It inherits the 88% sensitivity and 88% specificity established in the original Kroenke 2001 validation study rather than generating validity of its own.

2. Cross-Mode Reliability: Two decades of research show the PHQ-9 maintains its psychometric properties across paper, telephone, and digital modes. A 2025 meta-analysis reported high pooled internal consistency (α = 0.86) across diverse delivery formats.

3. Type A vs. Type B AI: “Type A” AI (like MedLaunch) delivers and scores the validated instrument. “Type B” AI uses voice biomarkers to predict scores without administering the actual test. MedLaunch relies on the well-studied validity of the PHQ-9 across administration modes.

4. The HopeBot Study (2025): A recent preprint found excellent agreement (ICC = 0.91) between self-administered and voice-chatbot-administered PHQ-9 scores. This suggests that patients answer a voice AI in much the same way they answer the traditional self-administered form.

5. Reducing Human Error: AI administration improves wording consistency, completion rates, and scoring accuracy. By ensuring no items are skipped or paraphrased and by eliminating arithmetic errors, it reduces the variability often found in human administration.

6. What It Is Not: AI administration does not turn the PHQ-9 into a diagnostic test, nor does it replace a clinician’s responsibility. It is a screening tool designed to facilitate measurement-based care, not a validation of unproven biomarker technologies.

AI-administered PHQ-9 is clinically valid when the validated nine items are delivered in their validated form and scored against their validated thresholds. This guide walks through the evidence behind that answer: the foundational PHQ-9 validation literature, the mode-of-administration research, and the most recent direct study on voice-AI administration. Together, that evidence is the honest, defensible answer to the validity question every clinician asks during a vendor evaluation.

The clinical director of a mental health practice sits across from a vendor in a 30-minute demo.

The pitch ends. She has one question. “Has this been clinically validated?”

The vendor shows her a graph. She watches them carefully. She has been asked this question herself, by her own clinical staff, every time the practice has adopted a new instrument. She knows what a clinically defensible answer sounds like and what a marketing answer sounds like. She is patient enough to listen to the marketing answer once and decide based on what comes next.

What she wants to hear is a clear distinction between three things: the validity of the PHQ-9 itself as an instrument, the validity of administering it through a particular mode, and the validity of any specific vendor’s implementation. These are three different questions with three different evidence bases. A vendor who collapses them into one cannot be trusted with the rest of what they are selling.

This guide answers all three questions in sequence, with the actual evidence. It is written so that a clinic owner can read it without a clinical background and walk away with a clear understanding of where the validity claim is solid and where it is qualified. It is also written so that a clinical reviewer, vetting MedLaunch on behalf of a practice, can read it and find the claims defensible against the published literature.

The honest answer is yes when AI administration means delivering the validated instrument in its validated form, scored against its validated thresholds. The longer answer is what the rest of this guide is for.


1. The PHQ-9’s Underlying Clinical Validity Is Well-Established

Any conversation about whether AI-administered PHQ-9 is valid has to begin with the validity of the PHQ-9 itself. Without the foundation, the rest of the argument has nowhere to stand.

The PHQ-9 was developed by Robert L. Spitzer, Janet B. W. Williams, and Kurt Kroenke in the late 1990s, with validation published in the Journal of General Internal Medicine in 2001. The validation study enrolled 6,000 patients across 8 primary care clinics and 7 obstetrics-gynecology clinics. Criterion validity was assessed against an independent structured interview by a mental health professional in a sub-sample of 580 patients.

The headline findings have been the foundation of every PHQ-9 deployment since:

- Sensitivity 88%, specificity 88% for major depression at the standard cutoff score of 10

- Cronbach’s α of 0.89 in the primary care sample, 0.86 in the OB-GYN sample

- Test-retest reliability of 0.84 between clinic-administered and telephone-administered PHQ-9 within 48 hours

- Severity thresholds of 5, 10, 15, and 20 corresponding to mild, moderate, moderately severe, and severe depression respectively
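As a minimal sketch of how those cut points translate into a label, the Python below maps a PHQ-9 total (0 to 27) to its severity band and checks the standard screening cutoff of 10. The function names are illustrative and not taken from any particular product. The “minimal” label for totals below 5 is an assumed wording, since the thresholds above only name the bands from 5 upward.

```python
# Lower bound of each PHQ-9 severity band, checked from most to least severe.
# Illustrative sketch: labeling totals of 0-4 as "minimal" is an assumed wording.
SEVERITY_CUTOFFS = [
    (20, "severe"),
    (15, "moderately severe"),
    (10, "moderate"),
    (5, "mild"),
]


def severity_band(total: int) -> str:
    """Map a PHQ-9 total score (0-27) to its severity band."""
    if not 0 <= total <= 27:
        raise ValueError(f"PHQ-9 totals range from 0 to 27, got {total}")
    for lower_bound, label in SEVERITY_CUTOFFS:
        if total >= lower_bound:
            return label
    return "minimal"


def meets_screening_cutoff(total: int, cutoff: int = 10) -> bool:
    """True when the total reaches the standard screening cutoff of 10."""
    return total >= cutoff


print(severity_band(18))           # moderately severe
print(meets_screening_cutoff(18))  # True
```

A total of 18, for example, falls in the moderately severe band and meets the screening cutoff. As Section 6 notes, that indicates a clinical assessment is warranted. It is not a diagnosis.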

This was not an isolated finding. In the two and a half decades since, the PHQ-9 has accumulated one of the largest and most consistent psychometric validation literatures of any depression screening instrument.

A 2025 reliability generalization meta-analysis published in Discover Mental Health aggregated 60 studies with 232,147 participants. The pooled internal consistency was Cronbach’s α = 0.86 (95% CI 0.85–0.87). In subgroup analyses, self-administered formats achieved α = 0.87 and interview-based modes achieved α = 0.81. Both values exceed the 0.70 threshold conventionally treated as acceptable, and both exceed the 0.80 threshold treated as good. Test-retest reliability across eight studies was 0.82.
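For readers less familiar with the statistic, Cronbach’s α compares the variance of each item with the variance of the total score: α = k / (k - 1) × (1 - sum of item variances / variance of total scores), where k is the number of items. The sketch below computes it from a respondents-by-items matrix using only the Python standard library. The function name and the toy data are illustrative and are not drawn from the meta-analysis.

```python
from statistics import pvariance


def cronbach_alpha(scores: list[list[int]]) -> float:
    """Cronbach's alpha for a matrix with one row per respondent and one column per item.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    Population variances are used throughout; the n vs. n - 1 choice cancels in the ratio.
    """
    k = len(scores[0])
    if k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need a rectangular matrix with at least two items")
    item_variances = [pvariance([row[j] for row in scores]) for j in range(k)]
    total_variance = pvariance([sum(row) for row in scores])
    if total_variance == 0:
        raise ValueError("total scores have zero variance; alpha is undefined")
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Toy data: three respondents who answer every item identically,
# so alpha is 1 (up to floating-point rounding).
print(cronbach_alpha([[0, 0, 0], [1, 1, 1], [2, 2, 2]]))
```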

A separate large-scale 2022 study by Bianchi and colleagues (n > 58,000) found that the PHQ-9’s total score is essentially unidimensional and demonstrates measurement invariance across demographic groups and nationalities. In practical terms, a given PHQ-9 score carries comparable meaning across the populations studied.

The National Institute for Health and Care Excellence in the UK endorses the PHQ-9 for measuring depression severity and treatment responsiveness. The PHQ-9 and its eight-item variant (the PHQ-8, which omits item 9) are used in U.S. federal surveillance programs, including the National Health and Nutrition Examination Survey and the Behavioral Risk Factor Surveillance System. The PHQ-9 is also among the depression screening instruments cited in U.S. Preventive Services Task Force guidance.

This is the floor under everything else in this post. The PHQ-9 is not a tool whose underlying validity is in question. The question this post addresses is what happens to that validity when administration moves from paper to AI voice.

2. The Validity of “AI Administration” Depends on What That Phrase Means

There are two genuinely different technologies sold under the umbrella “AI PHQ-9” in 2026, and they have very different validity profiles. Treating them as the same thing is the single most common source of confusion in this category and the move that legitimately frustrates clinical reviewers.

Type A — AI delivers the standard PHQ-9 by voice

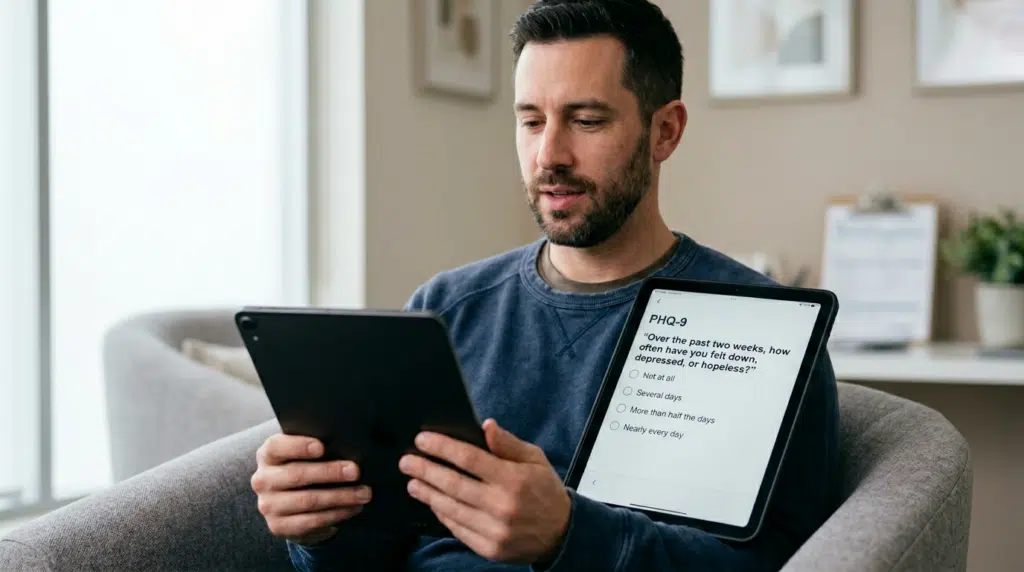

The patient hears each of the nine standard PHQ-9 questions read aloud by an AI voice assistant. The questions are delivered in the validated order, with the validated wording, on the validated response scale (0 = not at all, 1 = several days, 2 = more than half the days, 3 = nearly every day). The patient’s verbal response is captured, and the total score is calculated and mapped to the validated severity thresholds.
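The capture step can be sketched as follows, assuming a speech-to-text layer has already turned the patient’s answer into a text transcript. The hypothetical `score_response` helper accepts only the four validated response options, ignoring case, extra spaces, and surrounding punctuation. It returns `None` for anything else, so the item can be asked again with the validated options instead of being guessed. How a production system handles paraphrased answers is a vendor-specific design question that this sketch does not answer.

```python
from typing import Optional

# The validated PHQ-9 response options and their point values.
RESPONSE_SCALE = {
    "not at all": 0,
    "several days": 1,
    "more than half the days": 2,
    "nearly every day": 3,
}


def score_response(transcript: str) -> Optional[int]:
    """Return the 0-3 score for a transcript matching a validated option, else None."""
    # Normalize case, surrounding punctuation, and internal whitespace only.
    normalized = " ".join(transcript.lower().strip(" .!?,").split())
    return RESPONSE_SCALE.get(normalized)


print(score_response("Several days."))  # 1
print(score_response("sometimes"))      # None
```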

The instrument itself is unchanged. The administration mode has changed.

The validity question that applies to Type A is: does mode of administration affect the validity of the PHQ-9? This is a well-studied question with a substantial published literature. Section 3 of this post addresses it directly.

MedLaunch is a Type A system.

Type B — AI infers a PHQ-9-equivalent score from voice biomarkers

The AI does not administer the PHQ-9. It analyzes features of the patient’s natural speech (pitch, intonation, pauses, and cadence) during a different kind of conversation and predicts what the patient’s PHQ-9 score would be if they completed the instrument.

The instrument is not delivered. A predicted equivalent is generated.

The validity question that applies to Type B is fundamentally different: can voice biomarker analysis substitute for the PHQ-9? This is a much earlier-stage research area. Published Type B systems have reported sensitivity and specificity in the 70–75% range, meaningfully below the 88%/88% reported for the PHQ-9 itself. Type B is a legitimate research direction with promising signals, but it is not the same thing as Type A and the evidence base does not transfer.
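Because the Type A versus Type B comparison turns on these two numbers, it may help to see how they are computed. Sensitivity is the share of patients who have the condition (according to the reference-standard interview) whom the screen flags. Specificity is the share of patients who do not have the condition whom the screen clears. The sketch below uses hypothetical toy data, not results from any published study.

```python
def sensitivity_specificity(
    screen_positive: list[bool], has_condition: list[bool]
) -> tuple[float, float]:
    """Compare screen results with a reference-standard diagnosis, patient by patient."""
    if len(screen_positive) != len(has_condition):
        raise ValueError("both lists must cover the same patients")
    pairs = list(zip(screen_positive, has_condition))
    true_pos = sum(1 for s, d in pairs if s and d)
    false_neg = sum(1 for s, d in pairs if not s and d)
    true_neg = sum(1 for s, d in pairs if not s and not d)
    false_pos = sum(1 for s, d in pairs if s and not d)
    if true_pos + false_neg == 0 or true_neg + false_pos == 0:
        raise ValueError("need at least one patient with and one without the condition")
    return true_pos / (true_pos + false_neg), true_neg / (true_neg + false_pos)


# Hypothetical toy data: eight patients, four of whom have the condition.
screen = [True, True, True, False, False, False, False, True]
diagnosis = [True, True, True, True, False, False, False, False]
print(sensitivity_specificity(screen, diagnosis))  # (0.75, 0.75)
```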

Why the distinction matters

A clinic evaluating an “AI PHQ-9” vendor needs to know which type they are evaluating. The validity claims appropriate to one are not appropriate to the other. A vendor who is selling Type B but claims the validity of Type A is overclaiming. A vendor who is selling Type A but cannot articulate the difference is signaling that they have not engaged with the literature.

For the rest of this post, when the term “AI-administered PHQ-9” is used, it refers to Type A, the validated instrument delivered by voice. Type B is acknowledged in Section 6 and in the FAQ, but is not the subject of this guide.

3. What Mode-of-Administration Research Shows About the PHQ-9

The PHQ-9 has been studied across multiple administration modes: paper self-report, computerized self-report, in-person interview, telephone interview, and most recently interactive voice. The consistent finding across two decades of this research is that the instrument holds its psychometric properties when the questions are delivered in the validated order with the validated wording.

Telephone vs. in-person administration

The original Kroenke 2001 validation study included a sub-analysis comparing the PHQ-9 self-administered by the patient in the clinic with the PHQ-9 administered by a mental health professional by telephone within 48 hours. The correlation between the two scores was 0.84, and mean scores were nearly identical (5.08 vs. 5.03).

A separate primary care validation study examining telephone administration in detail found a small mean score difference of approximately 0.60 points lower for telephone administration compared to self-administration, a difference the published assessment characterized as “minor and probably lacked clinical relevance,” given that PHQ-9 severity thresholds are spaced 5 points apart.

Self-administered vs. interview-administered across the meta-analytic literature

The 2025 reliability generalization meta-analysis cited in Section 1 examined administration mode as a moderator across its 60-study sample. The findings:

- Self-administered formats: Cronbach’s α = 0.87 (95% CI 0.86–0.88)

- Interview-administered formats: Cronbach’s α = 0.81 (95% CI 0.79–0.84)

Both point estimates exceed the 0.80 threshold for good reliability. The difference between them is small. It may reflect the unstructured variability that human interviewers introduce, such as paraphrasing, vocal emphasis, and follow-up questions, rather than a deficit in either mode.

What the literature does not show

The literature does not show that the PHQ-9 produces meaningfully different scores across delivery modes when the instrument’s content, order, and scoring are preserved. It does not show that one administration mode is clinically superior to another for the purpose the PHQ-9 was designed for: identifying and tracking depression severity in outpatient settings.

The relevant mechanism: the PHQ-9 is a structured, self-report instrument in which each item maps directly to a DSM diagnostic criterion. As long as the patient is asked the validated question, given the validated response options, and the responses are scored against the validated thresholds, the instrument is doing what it was designed to do. The mode is a delivery vehicle. The content is the assessment.

4. The Specific Evidence on AI/Voice-Administered PHQ-9

The mode-of-administration literature establishes that the PHQ-9 holds across paper, telephone, computerized, and interview modes. The remaining question, the one this post exists to answer, is whether voice-AI administration falls within the same envelope.

The most recent direct evidence is the HopeBot study from University College London, published in 2025 as a preprint on arXiv and Research Square.

HopeBot: design and findings

HopeBot is an LLM-based voice chatbot developed at UCL to administer the standard PHQ-9 conversationally, with retrieval-augmented generation supporting the chatbot’s clinical grounding. The study was a within-subject design: 132 adults across the United Kingdom and China completed both the standard self-administered PHQ-9 and the HopeBot voice-chatbot version. The two scores for each participant were compared.

The key findings:

- Intraclass correlation coefficient (ICC, model 3,1) = 0.91 (95% CI 0.88–0.93), interpreted in the published methodology as “excellent agreement”

- Spearman’s rank correlation: ρ = 0.92 (p < 0.001)

- 45% of participants produced identical scores across the two modes

- 71% of the 75 participants providing comparative feedback reported greater trust in the chatbot version than the self-administered version, citing clearer structure, interpretive guidance, and supportive tone

- Mean comfort rating: 8.4/10. Voice clarity: 7.7/10. Handling sensitive topics: 7.6/10.
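The agreement statistic can also be sketched in Python. In the Shrout and Fleiss scheme, ICC(3,1) (two-way mixed effects, consistency, single measurement) equals (MS_participants - MS_error) / (MS_participants + (k - 1) × MS_error), where k is the number of administration modes. Because it measures consistency, a uniform offset between the two modes does not lower it, which is why a companion figure such as the share of identical scores can be informative. The function names and toy data below are illustrative. This is not the HopeBot study’s analysis code.

```python
from statistics import fmean


def icc_3_1(scores: list[list[float]]) -> float:
    """ICC(3,1): rows are participants, columns are administration modes."""
    n, k = len(scores), len(scores[0])
    if n < 2 or k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need a rectangular matrix with at least 2 rows and 2 columns")
    grand_mean = fmean(x for row in scores for x in row)
    row_means = [fmean(row) for row in scores]
    col_means = [fmean(row[j] for row in scores) for j in range(k)]
    # Between-participant sum of squares.
    ss_rows = k * sum((m - grand_mean) ** 2 for m in row_means)
    # Residual sum of squares after removing participant and mode effects.
    ss_error = sum(
        (scores[i][j] - row_means[i] - col_means[j] + grand_mean) ** 2
        for i in range(n)
        for j in range(k)
    )
    ms_rows = ss_rows / (n - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    denominator = ms_rows + (k - 1) * ms_error
    if denominator == 0:
        raise ValueError("all scores are identical; ICC is undefined")
    return (ms_rows - ms_error) / denominator


def share_identical(pairs: list[tuple[int, int]]) -> float:
    """Fraction of participants whose two scores match exactly."""
    return sum(1 for a, b in pairs if a == b) / len(pairs)


# Hypothetical toy data: (self-administered total, voice-administered total).
pairs = [(1, 2), (2, 1), (3, 3)]
print(icc_3_1([list(p) for p in pairs]))  # 0.5
print(share_identical(pairs))
```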

Honest framing of this evidence

The HopeBot study is currently available as a preprint and has not yet completed peer review at the time of writing. This matters, and the post is direct about it: a single 132-person study, even one with strong agreement statistics, is not the same body of evidence as the 60-study meta-analysis underlying the PHQ-9 itself.

What the HopeBot study does establish is a specific, recent, and methodologically clear data point. When a voice-AI system administers the standard PHQ-9 conversationally, the scores agree with self-administered scores at ICC 0.91. That level of agreement is consistent with what the broader mode-of-administration literature would predict for any well-implemented delivery mode.

This is not the same as saying voice administration is better. It suggests voice administration is not worse. For an instrument as well-established as the PHQ-9, that is the relevant validity question. The instrument’s own validity is the ceiling, and the mode of administration determines whether that ceiling is approached or eroded.

The HopeBot data suggests it is approached.

Other published research in the space

Beyond HopeBot, the published research on voice-AI administration of the PHQ-9 specifically is still small. The broader literature on chatbot-administered psychometric instruments is growing rapidly across 2024–2026, and several university research programs (UCL’s HopeBot among them) are running active validation studies. A clinic vetting any specific Type A vendor in 2026 should ask the vendor what published evidence supports their specific implementation, distinct from the broader literature.

5. What AI Administration Adds Beyond Matching Paper Validity

If AI administration only matched paper validity, there would be no reason to deploy it. The validity question is: does AI administration meet the floor? The next question is: does it offer anything beyond it?

The answer is three measurable improvements over manual administration. None of these change the instrument’s ceiling validity. All three reduce sources of variability that exist in human-administered or self-administered paper PHQ-9.

Wording consistency

Every patient receives the questions in the validated order, with the validated wording, on the validated response scale. The interviewer does not paraphrase. The clinician does not abbreviate. The front-desk staff member does not skip the difficulty rating. The wording of the instrument is preserved across every administration, every patient, and every visit.

This is not a small thing. The validity studies underlying the PHQ-9 are based on patients being asked the specific questions as written. The further the field administration drifts from that wording, the less the validity literature applies.

Completion consistency

The AI does not skip questions. It does not move on before the patient has responded. It does not assume an answer based on what the patient said earlier. Every administration is a complete, in-order set of nine items plus the difficulty rating: the full validated instrument, every time.

In manual administration, particularly during high-volume clinic days, partial PHQ-9 responses are common. A score calculated on seven of nine items is not a validated PHQ-9 score. AI administration eliminates this source of incomplete data.
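The completion rule can be made explicit in code. This hypothetical `phq9_total` helper refuses to produce a total unless all nine items have a valid 0–3 answer, instead of scoring whatever was collected. The difficulty rating is recorded separately and is not part of the 0–27 total, so it is left out here.

```python
from typing import Optional, Sequence


def phq9_total(item_scores: Sequence[Optional[int]]) -> int:
    """Sum the nine PHQ-9 items; refuse to score an incomplete administration."""
    if len(item_scores) != 9:
        raise ValueError(f"expected 9 items, got {len(item_scores)}")
    missing = [number for number, s in enumerate(item_scores, start=1) if s is None]
    if missing:
        raise ValueError(f"unanswered items: {missing}; total not calculated")
    invalid = [number for number, s in enumerate(item_scores, start=1) if s not in (0, 1, 2, 3)]
    if invalid:
        raise ValueError(f"items outside the 0-3 scale: {invalid}")
    return sum(item_scores)  # all nine values are now known to be 0-3


print(phq9_total([1, 2, 1, 2, 1, 1, 0, 1, 0]))  # 9
```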

Scoring consistency

Manual scoring of the PHQ-9 introduces a small but non-zero arithmetic error rate. Across a clinic’s annual screening volume, the cumulative effect is not negligible, particularly when a miscalculated score lands on the wrong side of a severity threshold. Automated scoring eliminates arithmetic errors, provided the patient’s responses were captured correctly.

What these improvements are not

These three improvements are not validity improvements in the psychometric sense. The PHQ-9’s sensitivity and specificity, its internal consistency, its test-retest reliability do not change because of administration consistency. The instrument’s own properties remain what they were.

What changes is the fidelity of real-world implementation to the validated administration. AI delivery narrows the gap between the validated PHQ-9 in the literature and the actual PHQ-9 administered in the clinic on a busy Tuesday afternoon. The score the clinician sees is more likely to be the score the validated instrument would have produced. That is a different kind of improvement, but a real one.

6. The Honest Limits: Where the Evidence Does Not Yet Reach

A clinically credible post on this question has to be explicit about what the evidence does not yet support. This section exists for that purpose.

The HopeBot study is one study

The HopeBot study is the most direct evidence we currently have on voice-AI administration of the PHQ-9. It is a 132-person within-subject study, currently a preprint. Its findings are consistent with the broader mode-of-administration literature, which strengthens the inference. But a single study is not a body of evidence, and the literature on voice-AI PHQ-9 specifically will mature over the next two to three years.

Vendor-specific implementations require their own evaluation

The validity inherited from the PHQ-9 itself, and the supportive evidence from the mode-of-administration literature, apply to any system that delivers the validated instrument in its validated form. They do not validate any specific vendor’s implementation. A clinic evaluating any Type A vendor, including MedLaunch, should ask the vendor what published or internal validation supports their specific system, beyond the literature on the broader category.

Type B is a different technology with different evidence

This post has been about Type A: AI delivering the validated PHQ-9. Type B, in which AI infers a PHQ-9-equivalent score from voice biomarkers without administering the instrument, is a separate technology with a separate, earlier-stage evidence base. Published Type B systems report sensitivity and specificity in the 70–75% range, meaningfully below the PHQ-9’s own 88%/88%. A clinic should not assume Type A’s evidence transfers to Type B, or vice versa.

The PHQ-9 is a screening instrument, not a diagnostic test

This is true regardless of how the PHQ-9 is administered. A score of 18 does not diagnose major depressive disorder. It indicates that the patient meets the screening threshold for moderately severe depression and that a clinical assessment is warranted to make or rule out a diagnosis. AI administration does not change this. The clinical responsibility for diagnosis remains with the clinician.

The validity is for what the instrument was validated for

The PHQ-9 was validated in adult primary care and obstetrics-gynecology populations for the purpose of screening for major depressive disorder and assessing depression severity. It has been subsequently validated in many other populations and settings. AI administration does not extend the instrument’s validity to populations or use cases it was not validated for. If an AI system administers the PHQ-9 to a population the instrument has not been validated in, the validity question reverts to the instrument’s underlying validation in that population, not to the AI system.

There is no FDA approval for AI-administered PHQ-9 because the PHQ-9 itself does not require one

A question that comes up regularly: is AI-administered PHQ-9 FDA-approved? The PHQ-9 itself is a screening instrument, not a diagnostic device, and it is freely available for use without permission. It does not require FDA clearance, regardless of administration mode. AI-administered PHQ-9 inherits the same regulatory status. A vendor claiming FDA approval of the PHQ-9 itself would be making an inaccurate claim. A vendor’s broader software platform may have separate regulatory considerations depending on its specific claims and intended use, which a clinic should evaluate independently.

7. What This Means for a Clinic Evaluating MedLaunch

This section translates all of the above into the practical question a clinic owner is actually asking.

MedLaunch is a Type A system. It administers the standard PHQ-9 by voice (the validated nine items, in the validated order, with the validated wording), captures the patient’s verbal responses, scores them against the validated severity thresholds, and delivers the result to the clinician’s EHR before the consultation begins. Question 9 alerts route to assigned clinical staff in real time when the response indicates suicidal ideation.

The validity question that applies is the mode-of-administration question, and the relevant evidence is what this post has walked through:

- The PHQ-9’s underlying validity (88%/88% sensitivity/specificity, α = 0.86 across 232,147 participants in the 2025 meta-analysis)

- The two-decade mode-of-administration literature showing the PHQ-9 holds across delivery modes when content, order, and scoring are preserved

- The most recent direct evidence on voice-AI administration (HopeBot, ICC 0.91, currently a preprint)

The clinical responsibility for diagnosis remains with the clinician. The PHQ-9 is and remains a screening instrument. The Question 9 alert flow is a workflow improvement that informs the clinical team of a suicidal ideation response before the consultation begins, rather than when the chart is opened. It does not constitute a clinical claim about voice-based risk prediction.

A clinical reviewer vetting MedLaunch on behalf of a practice will reasonably ask three additional questions beyond the literature this post addresses:

- Does the system deliver the PHQ-9 in its validated form? This is verifiable by reviewing the script the AI uses against the published PHQ-9 instrument.

- Does the system handle Question 9 responses in a way that aligns with the practice’s safety protocol? This is verifiable through the system’s alert configuration and routing.

- What internal or published validation does MedLaunch have for its specific implementation, beyond the broader category literature? This is a question to ask MedLaunch directly.
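The first check lends itself to a simple automated comparison. The sketch below assumes the reviewer has exported the AI’s item prompts and typed in the published item wording as two lists of strings. Both inputs and the `script_mismatches` name are hypothetical. The comparison ignores whitespace differences only, so any change in words, punctuation, or order is reported for a human to judge.

```python
def script_mismatches(script: list[str], reference: list[str]) -> list[str]:
    """Report where an AI script departs from the reference PHQ-9 item wording."""

    def normalize(text: str) -> str:
        # Collapse runs of whitespace; every other difference is treated as real.
        return " ".join(text.split())

    problems = []
    if len(script) != len(reference):
        problems.append(
            f"item count differs: script has {len(script)}, reference has {len(reference)}"
        )
    for number, (spoken, published) in enumerate(zip(script, reference), start=1):
        if normalize(spoken) != normalize(published):
            problems.append(f"item {number}: wording differs from reference")
    return problems


# Placeholder strings stand in for the published item wording.
reference = ["Item one wording", "Item two wording"]
script = ["Item one  wording", "Item 2 wording"]
print(script_mismatches(script, reference))  # ['item 2: wording differs from reference']
```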

These are appropriate questions for any vendor in this category. They are the right questions for the clinical reviewer to be asking.

8. Frequently Asked Questions

Is AI-administered PHQ-9 the same as the regular PHQ-9?

When the AI delivers the standard nine PHQ-9 items in the validated order, with the validated wording, scored against the validated severity thresholds, the instrument is identical. The administration mode is different. The mode-of-administration literature on the PHQ-9 indicates that the instrument’s psychometric properties hold across delivery modes when the content, order, and scoring are preserved.

Has AI-administered PHQ-9 been validated in peer-reviewed studies?

The most direct evidence currently available is the HopeBot study from University College London (within-subject design, 132 adults, ICC 0.91 with self-administered PHQ-9), which is available as a preprint at the time of writing and has not yet completed peer review. The broader mode-of-administration literature on the PHQ-9 is well-established across two decades and supports the inference that voice administration falls within the same validity envelope as other delivery modes when the instrument is delivered faithfully.

Is voice biomarker analysis valid?

Voice biomarker analysis is a different technology from voice administration. Biomarker systems analyze speech patterns (pitch, pauses, cadence) to predict what a PHQ-9 score would be, without administering the instrument. Published biomarker systems report sensitivity and specificity in the 70–75% range, lower than the PHQ-9’s own 88%/88%. These systems represent a legitimate research direction with promising signals, but should not be conflated with voice administration of the validated instrument.

Can the AI miss a positive Question 9 response?

A correctly implemented Type A AI system administers Question 9 verbatim and captures the patient’s response on the same 0–3 scale as the rest of the instrument. Any response other than “not at all” is flagged according to the practice’s safety protocol. The system does not interpret or analyze the response beyond capturing it accurately. The reliability of Question 9 alerting therefore depends primarily on accurate speech recognition of the patient’s response, which is a vendor-specific question to put to the system being evaluated.
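The flagging rule described above is simple enough to state in code. This is a hypothetical sketch, not any vendor’s implementation. It reads item 9 alone, independent of the total score, so a patient with a low total but a non-zero item 9 is still flagged. Treating an unanswered item 9 as needing follow-up is an added conservative assumption. How the flag is routed to staff is left to the practice’s safety protocol.

```python
from typing import Optional, Sequence

QUESTION_9 = 8  # zero-based index of item 9 in the nine-item list


def question_9_needs_alert(item_scores: Sequence[Optional[int]]) -> bool:
    """True when item 9 is anything other than 'not at all' (a score of 0).

    Assumption: an unanswered item 9 (None) is also flagged for follow-up.
    """
    if len(item_scores) != 9:
        raise ValueError(f"expected 9 items, got {len(item_scores)}")
    answer = item_scores[QUESTION_9]
    return answer is None or answer > 0


# Total of 1 ("minimal"), but item 9 was answered "several days".
print(question_9_needs_alert([0, 0, 0, 0, 0, 0, 0, 0, 1]))  # True
```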

Does the AI diagnose depression?

No. The PHQ-9 is a screening instrument, not a diagnostic test. A score above the cutoff indicates that the patient meets the screening threshold and that a clinical assessment is warranted. Diagnosis remains the responsibility of the clinician. This is true regardless of how the PHQ-9 is administered.

Is voice administration appropriate for patients with limited English proficiency, hearing differences, or cognitive concerns?

The PHQ-9 itself has been validated in over thirty languages and across diverse populations. Voice administration as a delivery mode does not extend the instrument’s validity to populations it has not been validated in. For patients with hearing differences, voice administration may not be appropriate, and a visual, written, or interpreter-supported administration may serve them better. For patients with cognitive concerns, the same validity considerations that apply to self-administered or interview-administered PHQ-9 apply to voice administration.

How does AI administration compare to nurse-administered PHQ-9?

The 2025 reliability generalization meta-analysis found self-administered formats achieving Cronbach’s α = 0.87 and interview-administered formats achieving α = 0.81. Both are above the threshold for good reliability. Nurse-administered PHQ-9, when delivered faithfully, is a valid administration mode. The improvements AI administration adds over manual nurse administration are not validity improvements in the psychometric sense; they are consistency improvements (wording, completion, and scoring fidelity) that reduce real-world drift from the validated instrument.

Is there an FDA approval for AI-administered PHQ-9?

The PHQ-9 itself is a freely available screening instrument and does not require FDA clearance. AI administration of the PHQ-9 inherits the same regulatory status, because the instrument being delivered is the same. A vendor’s broader software platform may have separate regulatory considerations depending on the specific claims and intended use the vendor makes, which should be evaluated independently of the underlying instrument’s status.

What should we ask any AI PHQ-9 vendor about validity?

Three questions. First: does the system deliver the PHQ-9 in its validated form, the standard nine items, in the validated order, with the validated wording? Second: how does the system handle Question 9 responses, and does the alert flow align with our practice’s safety protocol? Third: what internal or published validation does the vendor have for their specific implementation, beyond the broader literature on PHQ-9 mode of administration?

Is MedLaunch FDA-approved?

MedLaunch’s AI PHQ-9 screening administers the standard PHQ-9 in its validated form. The PHQ-9 itself does not require FDA clearance, as it is a freely available screening instrument. Questions about MedLaunch’s broader regulatory positioning should be directed to MedLaunch directly.

9. Conclusion

The clinical director in the demo room is asking the right question.

“Has this been clinically validated?” is the question that protects the practice, the clinical staff, and the patients. It is the question that separates well-implemented technology from marketing in a healthcare-tech environment where the line is sometimes blurred deliberately.

The honest answer to that question, for AI-administered PHQ-9 in 2026, has three layers.

The PHQ-9 itself is one of the best-validated screening instruments in psychiatry. It has two and a half decades of literature behind it, including 232,147 participants across 60 studies in the most recent meta-analysis, sensitivity and specificity of 88% at the standard cutoff, and internal consistency of 0.86. This is the floor.

The mode-of-administration literature shows the PHQ-9 holds its psychometric properties when the validated content, order, and scoring are preserved across delivery modes. Whether self-administered, interview-administered, telephone-administered, or computerized, the instrument does what it was designed to do.

The most recent direct evidence on voice-AI administration is consistent with what the broader literature would predict: ICC 0.91 between voice-chatbot-administered and self-administered PHQ-9 in the HopeBot study, currently a preprint. A single study, but a methodologically clear one, and one whose findings sit comfortably inside the envelope established by two decades of mode-of-administration research.

The category-level validity question is answered. The remaining questions are vendor-specific and belong in the clinical reviewer’s diligence process: does this vendor’s implementation deliver the validated instrument faithfully, does the alert flow align with our safety protocol, and what does the vendor’s internal validation show?

Those are the right questions to ask. They are also the questions a vendor who has done the work will be ready to answer.

Walk through validity, alerts, and integration.

Book a 20-minute call to see how MedLaunch delivers the validated PHQ-9, handles Question 9 alerts within your safety protocol, and integrates with your EHR.